Outsourcing vs nearshoring vs offshoring vs remote teams vs in-house

First, some background on the definitions of “outsourcing” used in this article.

https://www.netguru.co/blog/outsourcing-vs-nearshoring-vs

http://www.diffen.com/difference/Offshoring_vs_Outsourcing

Offshore outsourcing is the practice of hiring a vendor to do the work offshore, usually to lower costs and take advantage of the vendor’s expertise, economies of scale, and large and scalable labor pool.

This article focuses on “outsourcing” in the way most people see it: software being developed by a third-party company on the other side of the world.

The lessons here come from one of our customers, who applied these strategies to a remote team that they managed as an internal delivery center. The same strategies can be applied to offshore and outsourced application development.

Here are just some examples of why we need to think about how we go about outsourced and offshore projects.

Apologies if you work for any one of the companies listed in the following links.

http://www.lessonsoffailure.com/tag/outsourcing/

Nearly 50% of outsourced projects fail outright, or fail to meet expectations.

http://www.itproportal.com/2015/12/19/five-of-the-biggest-outsourcing-failures/

IBM – Queensland government contract for $6 million ended up costing $1.2 billion

http://www.bloomberg.com/news/articles/2007-01-23/india-software-outsourcing-one-unhappy-customer

Both of these projects will have to be rewritten sooner or later.

In the end, this cost us more and we were throwing money away.

So how can we avoid these failed projects?

There are many lessons to be learned about the different facets of outsourcing. The main ones are:

- picking appropriate projects to be outsourced

- establishing goals

- vendor selection

- contract management

This article focuses on one particular facet of outsourcing management: managing the code quality of the delivered applications once you start building them.

Below are excerpts from articles whose authors have already described their ideas, thoughts and suggestions. I have picked out some relevant points about ensuring quality. We will then explore some tools and strategies for putting these suggestions to use in real-world project work.

https://blogs.oracle.com/sathyan/entry/top_20_risks_in_outsourcing

| # | Risk | Mitigation |
| --- | --- | --- |
| 3 | Process and Quality standards incompatible with vendor. | Agreed upon standards and processes must be part of the binding contract. |
| 9 | Process non-alignment and differing governance model. | Establish compatible and agreeable processes and include them as part of the contract. |
| 13 | Lack of control or insight into vendor progress. | Well planned milestones, immediate deliverables along with appropriate documentation plan. |

http://www.yegor256.com/2014/12/18/independent-technical-reviews.html

Review From Day One. Don’t wait until the end of the project! I’ve seen this mistake many times. Very often startup founders think that until the product is done and ready for the market, they shouldn’t distract their team. They think they should let the team work toward project milestones and take care of quality later, “when we have a million visitors per day”.

http://www.yegor256.com/2015/05/21/avoid-software-outsourcing-disaster.html

Again, keeping the age-old principle in mind, I would recommend that from the first day of the project, you establish a routine procedure of checking their results and expressing your concerns.

The takeaway from this, I think, is that quality is important and that it doesn’t just happen.

What tools are there to help us achieve quality?

Version or source control, i.e. seeing your code.

If you’re not watching the code, you can’t manage it.

There are many choices:

- Git

- SVN

- Perforce

- Mercurial

- TFS

- SourceSafe

Each has strengths and weaknesses. The main point is to choose one, so that all the parties involved can see the code.

Tools that can help:

Fisheye

This lets you look at your code and see where it is changing and by whom.
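
Fisheye does this through its own interface. As a rough illustration of the same idea, the sketch below summarises which files changed and who changed them. It assumes that Git is the version control system you picked and that the input text was produced by `git log --name-only --pretty=format:@%an`; the function name and the sample data are hypothetical.

```python
from collections import defaultdict


def changes_by_author(log_text):
    """Map each author to the files they touched and how many commits touched them.

    Assumes each commit starts with a line "@<author name>" followed by the
    paths changed in that commit (file names starting with "@" would confuse it).
    """
    summary = defaultdict(lambda: defaultdict(int))
    author = None
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue  # blank lines only separate commits
        if line.startswith("@"):
            author = line[1:]  # commit header: remember who made the change
        elif author is not None:
            summary[author][line] += 1  # a file changed in this commit
    return {name: dict(files) for name, files in summary.items()}


# Hypothetical sample of the assumed log format.
SAMPLE_LOG = """@Alice
src/billing.py
src/invoice.py

@Bob
src/billing.py

@Alice
src/billing.py
"""

if __name__ == "__main__":
    for name, files in changes_by_author(SAMPLE_LOG).items():
        print(name, files)
```

Even a summary this small makes it obvious when one file is being changed repeatedly, or when nothing has been checked in at all.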

SonarQube

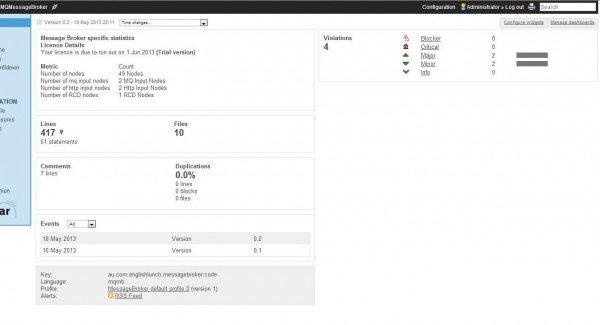

SonarQube is a tool for managing code quality, and it is also the basis of this article. Using SonarQube combines the two recommendations quoted above: it inspects code continuously and automatically, which means that you can review from the start and make the reviews an automatic, routine part of the project.

So now we have tools to “see” our application code. I say “our” application code because, at the end of the outsourcing contract, you will own the code. That is another reason to be concerned about its quality: we don’t want to take ownership of rubbish.

Next, we want to address how we make the reviewing of code quality part of the procedure.

For this we have manual code reviews and automatic code reviews.

Manual code reviews are where people review code that others have written. They can be performed internally within the team (peer review) or externally by a separate party, and you can manage them remotely with a “Kanban” board such as Lean Kanban Board.

Using a Kanban board helps you manage developer tasks, so if the developers are not already doing peer reviews, you can add a task for them to review new code. There are various pros and cons of manual reviews, but for the purpose of this article we will take it as a given that some code review is needed.

Another way of reviewing code quality is automatic code reviews, which brings us back to SonarQube.

SonarQube can be added as part of your development process to automatically scan your code for a variety of coding issues.

If we have SonarQube doing automatic reviews, do we still need manual reviews internally and externally?

Yes. They focus on different ends. SonarQube tends to pick up on things that developers should be doing and should not be doing.

Once SonarQube has reviewed the code and no major issues have been reported back to the developer, the internal and external reviews can add more value by looking at the big picture.

Of course, to review quality while an outsourced project is still under way, reviews have to be possible throughout the project, so the contract between the parties needs to provide for two things.

Firstly, the customer must have regular access to the project source code, so that it can be reviewed.

Secondly, the project must be made up of multiple milestones. You will not be able to manage the quality of the project’s deliverables if there is just one deliverable.

http://searchcio.techtarget.com/news/1375782/Managing-application-development-outsourcing-risks

Managing risk by setting expectations and intermittent milestones up front

Once you have a tool such as SonarQube in place, which can automatically analyse the code being developed by your outsourced development team, there are a few more steps to look at.

How can you make the triggering of the analysis automatic, part of the procedure?

There are “Continuous Integration” (CI) tools out there to help with this.

https://www.thoughtworks.com/continuous-integration

Continuous integration – the practice of frequently integrating one’s new or changed code with the existing code repository….. such that no errors can arise without developers noticing them and correcting them immediately.

There are many choices:

- TFS

- Jenkins

- Bamboo

- TeamCity

- CruiseControl

Once you have picked a CI tool, you can integrate the CI tool with the version control system you picked and with SonarQube to create a “build breaking” pipeline.

The “build breaker” in SonarQube works in conjunction with the CI server to warn interested parties when the quality of the code drops below a set threshold.
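
As a rough illustration of what “dropping below a set threshold” means, the sketch below compares a few measured values with agreed limits and lists the ones that fail. The metric names, the limits and the function are hypothetical and are not SonarQube’s own API; in practice the thresholds are configured in SonarQube itself.

```python
# Hypothetical thresholds, e.g. the standards agreed in the contract.
# Each entry is (kind, limit): "min" means the value must not fall below
# the limit, "max" means it must not rise above it.
THRESHOLDS = {
    "coverage_percent": ("min", 80.0),
    "duplicated_lines_percent": ("max", 5.0),
    "critical_issues": ("max", 0),
}


def failed_conditions(metrics, thresholds=THRESHOLDS):
    """Return a human-readable line for every threshold the metrics violate."""
    failures = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: no measurement")
        elif kind == "min" and value < limit:
            failures.append(f"{name}: {value} is below minimum {limit}")
        elif kind == "max" and value > limit:
            failures.append(f"{name}: {value} is above maximum {limit}")
    return failures


def build_should_break(metrics, thresholds=THRESHOLDS):
    """The build is 'broken' as soon as at least one condition fails."""
    return bool(failed_conditions(metrics, thresholds))


if __name__ == "__main__":
    # Hypothetical measurements from one analysis run.
    latest = {"coverage_percent": 72.5,
              "duplicated_lines_percent": 3.0,
              "critical_issues": 2}
    for line in failed_conditions(latest):
        print("FAILED", line)
```

A CI step built on this idea would end with a non-zero exit status whenever `build_should_break` returns true, which is what marks the build as failed and triggers the warning.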

The process works as follows.

1. The outsourced developer writes some code and checks it into version control so that you as the owner of the code can see what has been done.

2. The CI server sees that there has been some code changed, and runs the SonarQube analysis against the code.

3. If the quality of the code is good, then nothing is reported. All good.

4. If the quality of the code is questionable, you and the developer (and anyone else who is interested) will receive an email warning that the code quality has fallen.

5. The developer then fixes the code so that it passes the quality checks.

6. You come into work in the morning and check your “Fisheye” console to see what your outsourced developers have added to version control.

7. You then open your email and read any “Failed code quality” reports, and look for a follow-up email saying that “code quality is good again”. If there is no “code quality is good again” email, you ring your outsourced software manager to find out what happened with the code quality and when it will be fixed.
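
The notification logic in steps 3 to 7 can be sketched as a small state check: compare the result of the previous analysis with the current one and decide which email, if any, to send. The function names and message strings here are hypothetical, and a real CI server or SonarQube would normally send these notifications for you.

```python
from typing import List, Optional

FAILED = "Failed code quality"
RECOVERED = "Code quality is good again"


def notification_for(previous_ok: bool, current_ok: bool) -> Optional[str]:
    """Decide which email (if any) one analysis run should trigger."""
    if not current_ok:
        return FAILED      # step 4: quality has fallen, warn everyone
    if not previous_ok:
        return RECOVERED   # step 5: the developer fixed the code
    return None            # step 3: all good, nothing is reported


def inbox_after(results: List[bool], previous_ok: bool = True) -> List[str]:
    """Collect the emails produced by a sequence of analysis results."""
    inbox = []
    for current_ok in results:
        message = notification_for(previous_ok, current_ok)
        if message is not None:
            inbox.append(message)
        previous_ok = current_ok
    return inbox


def needs_phone_call(inbox: List[str]) -> bool:
    """Step 7: ring the vendor if the last failure was never followed by a recovery."""
    return bool(inbox) and inbox[-1] == FAILED


if __name__ == "__main__":
    overnight = inbox_after([True, False, False, True])
    print(overnight, "-> call vendor:", needs_phone_call(overnight))
```

Keeping the decision separate from the sending of email makes it easy to check the rules themselves before wiring them into a CI server.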

Next steps

The next logical step is to revisit the quality and coding standards that the developers are working towards.

Are they realistic? Have you set the bar too high initially, so that you end up delivering only small amounts of perfect code?

Are the quality reviews thorough enough?

Are the reviews too rigid, and are they impeding creativity?

All these are questions we can ask as we progress through our project, and they are far easier to ask during a project than they are to work out during the autopsy of a failed project.